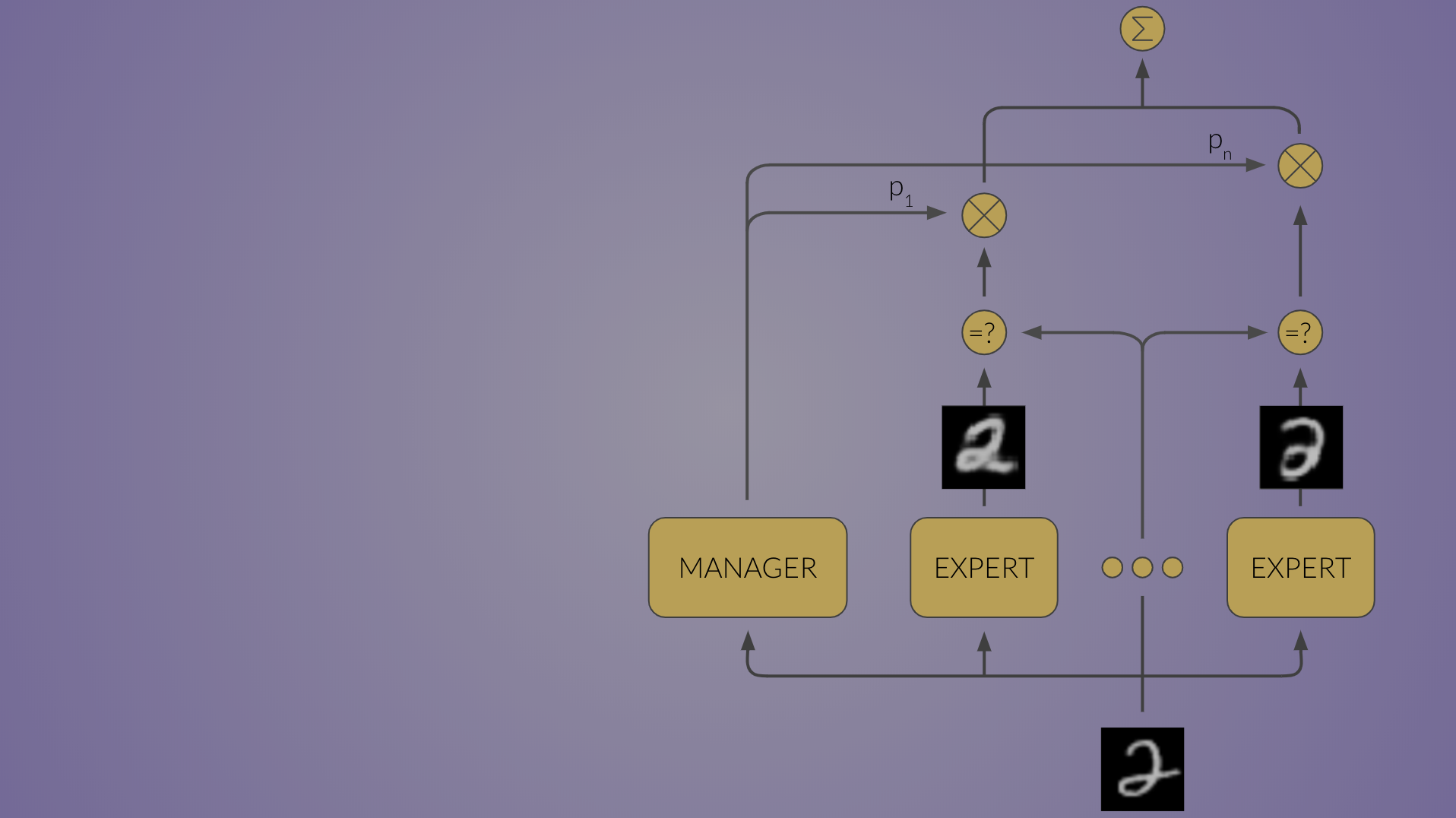

## Introduction

Deploying machine learning models with Flask is a straightforward way to integrate predictive capabilities into web applications. Flask, a lightweight web framework for Python, provides a simple yet powerful environment for serving machine learning models. In this article, we walk through the process of deploying a machine learning model using Flask, so that developers can put their predictive algorithms to work in real-world applications.

## What is Model Deployment and Why is it Important?

Model deployment in machine learning integrates a model into an existing production environment, enabling it to process inputs and generate outputs. This step is crucial for making the model available to a wider audience. For instance, if you’ve developed a sentiment analysis model, deploying it on a server allows users worldwide to access its predictions. Transitioning from a prototype to a fully functional application is what makes machine learning models valuable to end-users and systems.

The importance of deploying machine learning models cannot be overstated! While accurate model building and training are vital, their true worth lies in real-world application. Deployment facilitates this by applying models to new, unseen data, bridging the gap between historical performance and real-world adaptability. It ensures that the efforts put into data collection, model development, and training translate into tangible benefits for businesses, organizations, or the public.

## What are the Lifecycle Stages?

- **Develop Model:** Start by developing and training your machine learning model. This includes data pre-processing, feature engineering, model selection, training, and evaluation.
- **Flask App Development (API Creation):** Create a Flask application that will serve as the interface to your machine learning model. This involves setting up routes that will handle requests and responses.
- **Test & Debugging (Localhost):** Test the Flask application on your local development environment.
  Debug any issues that may arise.
- **Integrate Model with Flask App:** Incorporate your trained machine learning model into the Flask application. This typically involves loading the model and making predictions based on input data received through the Flask endpoints.
- **Flask App Testing & Optimization:** Further test the Flask application to ensure it works as expected with the integrated model. Optimize performance as needed.
- **Deploy to Production:** Once testing and optimization are complete, deploy the Flask application to a production environment. This could be on cloud platforms like Heroku, AWS, or GCP.

```
+-------------------+     +-------------------+     +-------------------+
|   Develop Model   +---->+   Flask App Dev   +---->+ Test & Debugging  |
|                   |     |  (API Creation)   |     |    (Localhost)    |
+---------+---------+     +---------+---------+     +---------+---------+
          |                         |                         |
          v                         v                         v
+---------+---------+     +---------+---------+     +---------+---------+
|  Model Training   |     |  Integrate Model  |     | Flask App Testing |
|   & Evaluation    |     |  with Flask App   |     |  & Optimization   |
+---------+---------+     +---------+---------+     +---------+---------+
          |                         |                         |
          v                         v                         v
+---------+---------+     +---------+---------+     +---------+---------+
|  Model Selection  |     |     Flask App     |     |     Deploy to     |
|  & Optimization   |     |   Finalization    |     |    Production     |
|                   |     |                   |     |  (e.g., Heroku,   |
|                   |     |                   |     |     AWS, GCP)     |
+-------------------+     +-------------------+     +-------------------+
```

## What are the Platforms to Deploy ML Models?

There are many frameworks and platforms available for deploying machine learning models. Some examples:

- **Django:** A Python-based framework with many built-in features, making it suitable for larger applications with complex requirements.
- **FastAPI:** A modern, fast (high-performance) web framework for building APIs with Python 3.6+ based on standard Python type hints.
  It’s gaining popularity for its speed and ease of use, especially for deploying machine learning models.
- **TensorFlow Serving:** Specifically designed for deploying TensorFlow models, this platform provides a flexible, high-performance serving system for machine learning models in production environments.
- **AWS SageMaker:** A fully managed service for building, training, and deploying machine learning models quickly. SageMaker handles much of the underlying infrastructure and provides scalable model deployment.
- **Azure Machine Learning:** A cloud service for accelerating and managing the ML project lifecycle, including model deployment to production environments.

In this article we are going to use Flask to deploy a machine learning model.

## What is Flask?

Flask, a lightweight WSGI web application framework in Python, has become a popular choice for deploying machine learning models. Its simplicity and flexibility make it an attractive option for data scientists and developers alike. Flask allows for quick setup of web servers to create APIs through which applications can communicate with the deployed models.

This means that Flask can serve as the intermediary, receiving data from users, passing it to the model for prediction, and then sending the response back to the user. Its minimalist design is particularly suited for ML deployments where the focus is on making a model accessible without the overhead of more complex frameworks.
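
The intermediary role described above can be sketched as a single Flask route. This is a minimal sketch rather than the article's final application: the `/predict` path, the JSON field name `text`, and the keyword-based `predict_sentiment` stand-in are assumptions made for illustration. In a real app the stand-in would call the trained model.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


def predict_sentiment(text):
    """Hypothetical stand-in for a trained model: 0 = negative, 1 = positive."""
    negative_words = {"bad", "hate", "terrible", "awful"}
    words = text.lower().split()
    return 0 if any(word in negative_words for word in words) else 1


@app.route("/predict", methods=["POST"])
def predict():
    # Receive data from the user as JSON; tolerate a missing or invalid body.
    payload = request.get_json(silent=True) or {}
    text = payload.get("text")
    if not isinstance(text, str) or not text.strip():
        return jsonify({"error": "JSON body must contain a non-empty 'text' field"}), 400
    # Pass the input to the model and send the prediction back to the user.
    return jsonify({"sentiment": predict_sentiment(text)})
```

Saved as `app.py` (a hypothetical filename), the app could be started locally with `flask --app app run` and exercised by POSTing JSON such as `{"text": "I hate waiting"}` to `/predict`.
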
Flask’s extensive documentation and supportive community also ease the deployment process.

## Which Platform to use to Deploy ML Models?

The choice among different platforms should be based on the specific needs of your project, including how complex your application is, your preferred programming language, scalability needs, budget constraints, and whether you prefer a cloud-based or on-premise solution.

For beginners or small projects, starting with Flask or FastAPI can be a good choice due to their simplicity and ease of use.

For larger, enterprise-level deployments, a managed service like AWS SageMaker, Azure Machine Learning, or Google AI Platform can provide more robust infrastructure and scalability options.

Flask often stands out for its simplicity and flexibility, making it an excellent choice for small to medium-sized projects or as a starting point for developers new to deploying machine learning models.

## ML Model: Predict the Sentiment of the Texts/Tweets

Before we can deploy anything, we first need to build a machine learning model. The model we are building aims to predict the sentiment of texts/tweets. Preparing a sentiment analysis model involves several stages, from data collection to model training and evaluation. This process lays the foundation for the model’s performance once deployed using Flask or any other framework. Understanding this workflow is essential for anyone looking to deploy their machine learning models effectively.

## Steps to Deploy a Machine Learning Model using Flask

The following steps are required:

### Step 1: Data Collection and Preparation

The first step in developing a sentiment analysis model is gathering a suitable dataset. The dataset should consist of text data labeled with sentiments, typically as positive, negative, or neutral.
Preparing the text includes removing unnecessary characters, tokenization, and possibly lemmatization or stemming to reduce words to their base or root form. This cleaning process ensures that the model learns from relevant features. We use a tweets dataset that is available online. It contains 7920 rows, with a tweet column holding the tweet text and a label column with values 0 and 1, where 0 stands for negative and 1 stands for positive.

```python
import re
import string

from nltk.corpus import stopwords
from nltk.stem import WordNetLemmatizer
from nltk.tokenize import word_tokenize

# Requires the NLTK tokenizer, stopwords, and WordNet data (via nltk.download).


def preprocess_text(text):
    # Convert text to lowercase
    text = text.lower()
    # Remove numbers and punctuation
    text = re.sub(r'\d+', '', text)
    text = text.translate(str.maketrans('', '', string.punctuation))
    # Tokenize text
    tokens = word_tokenize(text)
    # Remove stopwords
    stop_words = set(stopwords.words('english'))
    tokens = [word for word in tokens if word not in stop_words]
    # Lemmatize words
    lemmatizer = WordNetLemmatizer()
    tokens = [lemmatizer.lemmatize(word) for word in tokens]
    # Join tokens back into a string
    processed_text = " ".join(tokens)
    return processed_text
```

After preprocessing, the next step is feature extraction, which transforms text into a format that a machine learning model can understand. Traditional methods like Bag of Words (BoW) or Term Frequency-Inverse Document Frequency (TF-IDF) are commonly used.

These techniques convert text into numerical vectors by counting word occurrences or by weighting words based on their importance in the dataset, respectively. Our model takes a single input feature (the tweet text) and the corresponding output label.

### Model Architecture

The choice of model architecture depends on the complexity of the task and the available computational resources. For simpler projects, traditional machine learning models like Naive Bayes, Logistic Regression, or Support Vector Machines (SVMs) might suffice. These models, while straightforward, can achieve impressive results on well-preprocessed data.
For more advanced sentiment analysis tasks, deep learning models such as Convolutional Neural Networks (CNNs) or Recurrent Neural Networks (RNNs), including LSTMs, are often preferred due to their ability to capture context and sequence in text data. We use logistic regression for this sentiment classification problem.

### Training the Model

Model training involves feeding the preprocessed and vectorized text data into the chosen model architecture. This step is iterative, with the model learning to associate specific features (words or phrases) with particular sentiments. During training, it’s crucial to…
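
As a rough illustration of the feature-extraction and training steps described so far, the sketch below chains TF-IDF vectorization and logistic regression with scikit-learn. The article does not name a library, so scikit-learn, the toy `tweets` and `labels` lists, and the `predict_sentiment` helper are all assumptions; the real tweet and label columns would replace the toy lists.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline

# Toy stand-in for the tweet and label columns (0 = negative, 1 = positive).
tweets = [
    "i love this phone it is great",
    "what a wonderful happy day",
    "great service and friendly staff",
    "i hate this phone it is terrible",
    "what a miserable sad day",
    "terrible service and rude staff",
]
labels = [1, 1, 1, 0, 0, 0]

# TF-IDF turns each text into a weighted word-count vector;
# logistic regression then learns one weight per word.
model = make_pipeline(TfidfVectorizer(), LogisticRegression())
model.fit(tweets, labels)


def predict_sentiment(text):
    """Return the predicted label (0 or 1) for a single piece of text."""
    return int(model.predict([text])[0])
```

Wrapping the vectorizer and classifier in one pipeline means that a single object can later be saved and loaded by the Flask app, so raw text goes in and a label comes out without a separate vectorization step.
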

How to Deploy a Machine Learning Model using Flask?
